AI News Roundup: Meta Pays for News, Perplexity Gets Hacked, and AI Leaves the Cloud

Meta is paying News Corp $100M to train on journalism. Perplexity's browser just got caught with a nasty security flaw. Agentic AI is moving on-prem. And a new study says consumers aren't experimenting with AI anymore, they just use it. Four stories from one day that tell you where we actually are.

Four stories crossed my feed today that, taken together, paint a pretty clear picture of where AI is in March 2026. Not where the hype cycle says it is. Where it actually is.

Let's get into it.

Meta pays $100M to train on journalism

Meta signed a deal with News Corp worth up to $100 million for content licensing. The idea is straightforward: Meta gets high-quality training data from News Corp's publications (Wall Street Journal, New York Post, a bunch of Australian outlets), and News Corp gets paid for it instead of having its work scraped without permission.

I have mixed feelings about this.

On one hand, good. Journalists deserve compensation when their work trains systems that will compete with them for attention. The "fair use" argument that AI companies have been leaning on was always thin. You can't build a product that summarizes news articles and then claim the articles weren't important to your product.

On the other hand, $100M sounds like a lot until you realize Meta reported about $164 billion in revenue for 2024. At that scale the deal is a rounding error, well under a tenth of a percent of a year's revenue. And it sets a price floor that smaller AI companies can't afford, which means the big players lock up the best training data and everyone else gets the leftovers.
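To put a number on "rounding error," here's a quick sketch of the arithmetic. It uses only the two figures quoted above. The function names are mine, and the "hours of revenue" line assumes revenue arrives evenly across the year, which it doesn't, so treat that part as a rough illustration.

```python
# Figures as quoted in the text. The deal is worth "up to" $100M,
# so the result is an upper bound on the share.
DEAL_USD = 100e6
ANNUAL_REVENUE_USD = 164e9


def share_of_revenue(deal_usd: float, annual_revenue_usd: float) -> float:
    """Return the deal as a fraction of annual revenue."""
    return deal_usd / annual_revenue_usd


def hours_of_revenue(deal_usd: float, annual_revenue_usd: float) -> float:
    """Hours of revenue needed to cover the deal.

    Assumption: revenue is spread evenly over a 365-day year.
    """
    hours_per_year = 365 * 24
    return share_of_revenue(deal_usd, annual_revenue_usd) * hours_per_year


share = share_of_revenue(DEAL_USD, ANNUAL_REVENUE_USD)
hours = hours_of_revenue(DEAL_USD, ANNUAL_REVENUE_USD)

print(f"Share of annual revenue: {share:.3%}")
print(f"Equivalent hours of revenue: {hours:.1f}")
```

On those inputs the deal works out to roughly six hundredths of a percent of annual revenue, or a bit over five hours of it.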

The journalism-AI relationship is going to stay messy for a while. This deal doesn't resolve anything. It just establishes that money will change hands while both sides figure out the actual terms.

Perplexity's Comet browser is a security disaster

Here's one that should worry you if you've been using Perplexity's Comet browser. Security researchers found a flaw that exposed users to data theft. Not a theoretical vulnerability. An actual, exploitable security hole in a browser that's supposed to be the "AI-native" replacement for Chrome.

I keep going back and forth on Perplexity as a company. Their search product is genuinely useful. I use it daily. But they keep stepping on rakes. The plagiarism accusations last year. The publisher lawsuits. And now their browser, the product they positioned as their big platform play, ships with a hole big enough to drive a truck through.

If you're trying to convince people to switch from Google, you cannot afford security incidents. Trust is the single hardest thing to build in browsers. Mozilla spent decades building it. Google spent billions. Perplexity can't just ship fast and fix later when people's browsing data is on the line.

I'd pull Comet off my machine until they publish a full post-mortem. Not a PR statement. An actual technical breakdown of what went wrong, how long it was exposed, and what they've changed in their security review process.

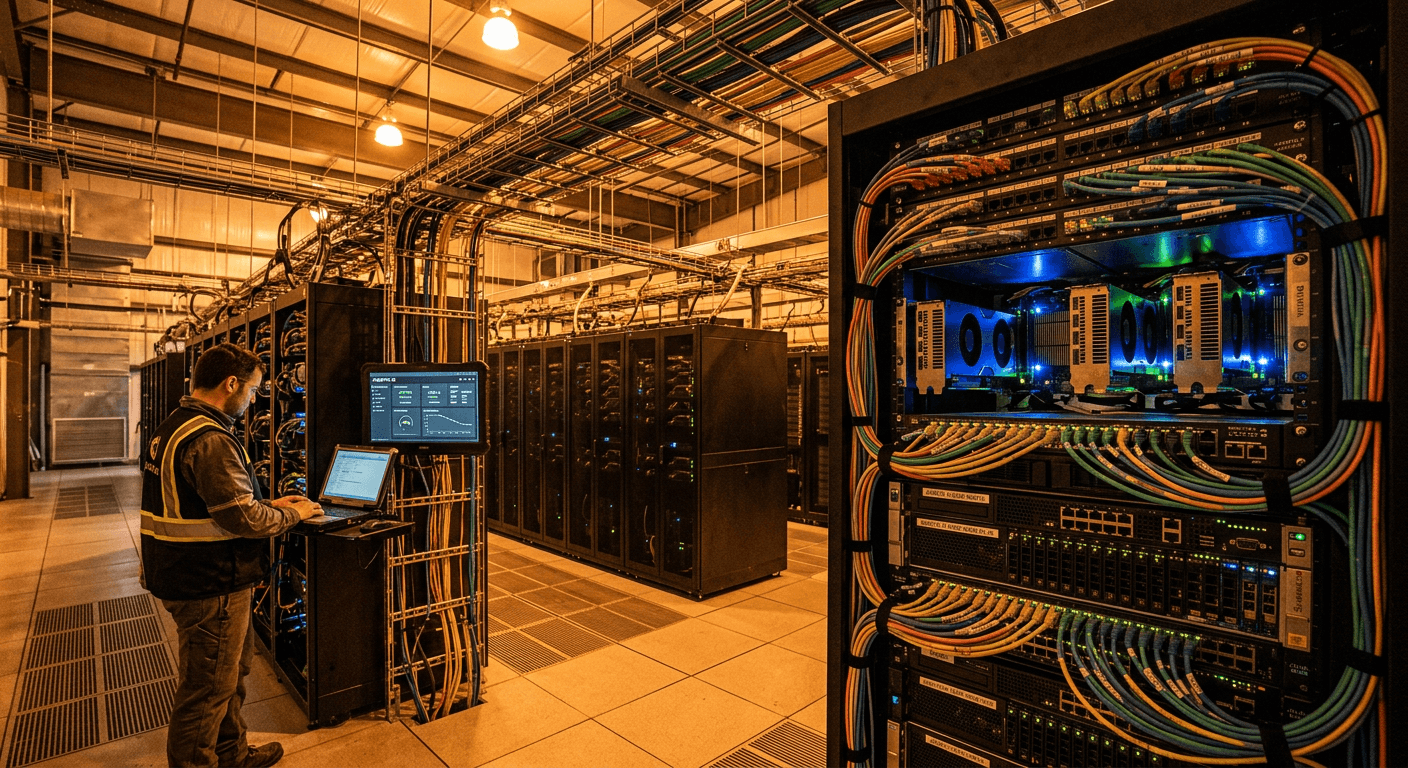

Agentic AI is leaving the cloud

This one's been building for months, but 2026 is the year it became undeniable: agentic AI workloads are migrating off the cloud. On-prem and edge deployments are gaining serious traction.

The reasons are practical. Latency matters when your AI agent is making decisions in real-time. Bandwidth costs add up fast when agents are chatty. And a lot of enterprises looked at their cloud bills for 2025, did some math on what agentic workloads would cost at scale, and decided they'd rather buy hardware.
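The "did some math" part is basically a break-even calculation. Here's a minimal sketch of what that might look like. Every number in it is a placeholder I made up for illustration, not a figure from any real bill or vendor quote, and the function name is hypothetical. It also ignores things that matter in practice, such as hardware depreciation, staffing, and utilization.

```python
from typing import Optional


def break_even_months(
    hardware_capex: float,
    onprem_monthly_opex: float,
    cloud_monthly_cost: float,
) -> Optional[float]:
    """Months until buying hardware beats renting cloud capacity.

    Returns None when on-prem running costs are not lower than the
    cloud bill, because the purchase then never pays for itself.
    """
    monthly_savings = cloud_monthly_cost - onprem_monthly_opex
    if monthly_savings <= 0:
        return None
    return hardware_capex / monthly_savings


# Placeholder inputs, purely illustrative.
months = break_even_months(
    hardware_capex=500_000,      # one-time hardware purchase
    onprem_monthly_opex=10_000,  # power, cooling, maintenance
    cloud_monthly_cost=35_000,   # projected cloud bill for the same work
)

if months is None:
    print("On-prem never breaks even on these inputs.")
else:
    print(f"Break-even after about {months:.0f} months.")
```

The point of the sketch is the shape of the decision, not the numbers: the chattier and more constant the agent workload, the bigger the monthly cloud bill, and the faster the hardware pays for itself.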

There's also the data governance angle. When your AI agent has access to internal systems and customer data, plenty of companies don't want that traffic leaving their building. I don't blame them.

What I find interesting is how this mirrors earlier compute cycles. Cloud was supposed to be the final destination for everything. Then it turned out that some workloads are cheaper, faster, or safer to run closer to where they're needed. We went through this with CDNs, with IoT, with video processing. AI is following the same gravitational pull.

The winners here will be companies that make on-prem AI deployment not terrible. Right now it's still painful. You need the hardware, the cooling, the expertise, the tooling. Nvidia is laughing all the way to the bank, obviously. But there's a real opening for the companies that can make edge AI as easy to manage as a cloud deployment. That problem isn't solved yet.

AI isn't new anymore

A PYMNTS study came out this week that confirmed something I've been feeling: consumers have stopped "trying" AI. They're just using it. It's not a novelty anymore. It's infrastructure.

This matters more than it sounds.

When a technology moves from "I should try this" to "I already used this three times today and didn't think about it," that's the adoption curve tipping. We're past the point where AI needs to prove it's useful. The question now is which AI products become the default, and which ones get forgotten.

The study found that people are using AI for writing, shopping, research, and planning without making a conscious decision to "use AI." It's just part of how they get things done. Email went through the same transition. So did smartphones.

I think this is going to have a bigger effect on the industry than most people realize. When users stop caring about the AI label, they start caring about the product quality. The novelty premium disappears. You can't sell "powered by AI" anymore. You have to sell something that actually works better than the alternative.

What connects all of this

Training data has a price now. Moving fast still breaks things that shouldn't break. The cloud isn't the only answer for AI compute. And regular people have already moved on from being impressed.

AI in March 2026 is being negotiated over, deployed on physical hardware, failing at basic security, and used without a second thought by millions of people. That's not hype. That's Wednesday.
