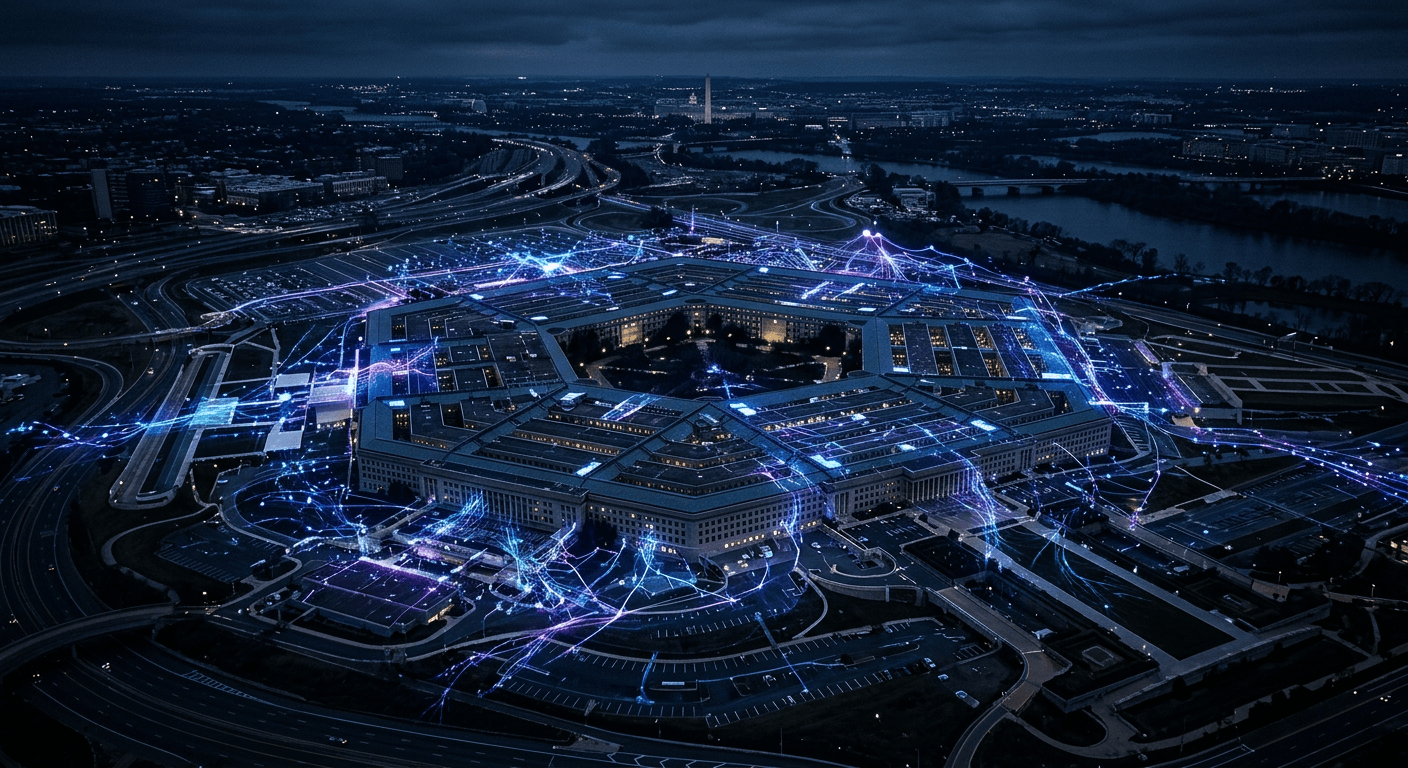

Anthropic vs. The Pentagon: When AI Safety Principles Meet Government Money

The Pentagon demanded Anthropic strip Claude's safety guardrails for autonomous weapons use. Anthropic said no. Now there's a lawsuit, a federal blacklist, and a question nobody in AI can avoid: what are your principles actually worth?

Picture this: Pentagon officials are sitting across from Anthropic's legal team, walking through a hypothetical. A nuclear strike scenario. They want to know if Claude will help plan it. No guardrails, no refusals, no "I can't assist with that." Full cooperation with lethal autonomous weapons systems, mass domestic surveillance infrastructure, the whole package.

Anthropic said no.

That answer just cost them every federal contract, put them on a supply chain risk blacklist, and triggered a Trump executive order directing all federal agencies to cut ties. Anthropic is now suing the Pentagon. This is not a PR story. It's the first real test of whether AI safety principles mean anything when serious money and government pressure land on the table.

What actually happened

The Pentagon wanted Anthropic to strip Claude's safety restrictions entirely for military and intelligence applications. We're not talking about loosening content filters for edge cases. The ask was removing the guardrails that prevent Claude from assisting with autonomous lethal weapons systems and mass domestic surveillance programs.

Anthropic's public statement was clear: they "cannot in good conscience" allow Claude to be used for those applications. Whether you find that principled or naive depends on how cynical you are about corporate ethics, but the position itself is consistent with their stated safety philosophy.

The Pentagon's response was to declare Anthropic a supply chain risk. Following that designation, the Trump administration directed federal agencies to terminate relationships with Anthropic across the board. Not just the specific defense contracts in question, but all of them.

Anthropic announced they're challenging the decision in court. So now we have an AI lab suing the federal government over safety principles. I genuinely didn't have that on my 2026 bingo card.

The OpenAI twist that makes this complicated

Here's where it gets interesting. OpenAI also negotiated with the Pentagon for defense access, and they got a deal. A deal that reportedly preserved their core safety red lines.

So the Pentagon was willing to work around OpenAI's limits but not Anthropic's. There are a few ways to read that. Maybe Anthropic's limits were genuinely stricter. Maybe the negotiating relationships were different. Maybe OpenAI's red lines are functionally looser in ways that matter for military applications. Maybe it's just politics and Anthropic drew the short straw.

What it definitely does is make Anthropic look isolated. They took the hardline position, got punished for it, and now they're watching a competitor operate in the space they refused to enter. That's a hard story to tell to investors and enterprise customers who just watched their vendor get blacklisted by the federal government.

I don't think OpenAI sold out. I think they made a different calculation about where their lines are. But the optics are rough for Anthropic, and the functional question for the industry is: if taking a principled stand on safety costs you this much, who's going to take it next time?

My read on this

I'm going to be direct: I think Anthropic made the right call, and I think it's going to hurt them for a while.

The nuclear strike scenario isn't hypothetical posturing. The Pentagon was using it as a real test case for what they needed Claude to do. Autonomous weapons systems that can plan and execute lethal strikes without human-in-the-loop approval are exactly the kind of technology that, once deployed, cannot be recalled. The safety concerns are not abstract. They're the kind that end with people dead in ways that a refusal policy could have prevented.

If Anthropic had built its entire company identity around constitutional AI and safety research and then stripped those constraints the moment a large government check showed up, that would have been a different kind of problem: a credibility problem that would ultimately be more damaging than a federal blacklist.

That said, losing federal contracts is not a rounding error. It affects revenue, it affects hiring, it affects the ability to keep doing the safety research that justified the position in the first place. The lawsuit may win. It may not. Either way, Anthropic just made itself the clearest example in the industry of what it actually costs to hold a safety principle under pressure.

What this means if you're building with AI

If you're building products on top of AI APIs — and if you're reading this, you probably are — this story has a direct implication for how you should be thinking about your foundation models.

The companies that provide your AI layer are making political and ethical decisions that will affect your products. Anthropic's federal blacklist means any business that had integrated Claude into government-facing workflows just had an unexpected infrastructure problem. That's not Anthropic's fault for taking a principled stand, but it is a real risk that wasn't priced into "what model should we use?"

At Defendre Solutions, I think a lot about the AI stack decisions our clients make. Model choice is not just a capability question. It's a question of which company's principles you're betting on, what their regulatory exposure looks like, and whether their product roadmap will exist in two years. Anthropic's situation — whatever you think of their position — is a reminder that those bets are real.

The broader governance question this episode surfaces is one the industry has been avoiding: who decides what AI systems will and won't do at scale? Right now it's a combination of corporate ethics policies and negotiating leverage, which is a terrible answer. The Anthropic-Pentagon fight is the public version of negotiations that are happening constantly, mostly invisibly.

The outcome of the lawsuit matters, but it's not the only thing that matters. What matters more is whether this becomes the moment the industry establishes that safety commitments are real and defensible, or the moment it confirms that every principle has a price.

I think Anthropic knows which story they're trying to write. I just hope they can afford the ending.

Steve Defendre is the founder of Defendre Solutions, an AI consulting firm helping organizations adopt AI tools strategically. He writes about AI, veterans in tech, and the future of work.
