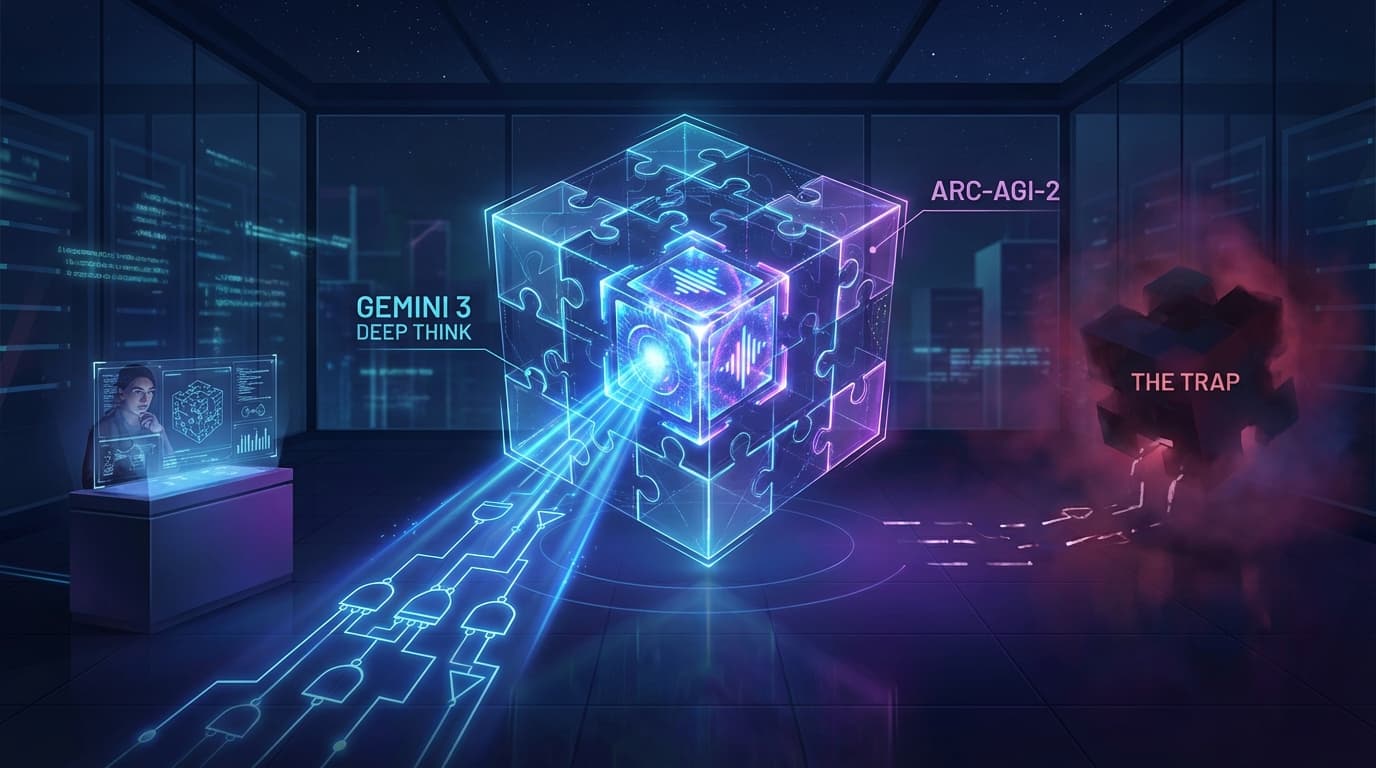

Google's Gemini 3 Deep Think Hits 84.6% on ARC-AGI-2: AGI Breakthrough?

Gemini 3 Deep Think posts serious reasoning gains. Here is the no-hype read on what the benchmark wave means for teams shipping products now.

Gemini 3 Deep Think’s benchmark numbers are strong — but raw scores alone never tell the whole story. What matters is how those gains translate into real execution for teams shipping production systems.

ARC-AGI-2 Is Important — but Context Is Everything

Hitting 84.6% on ARC-AGI-2 is a meaningful signal of improved abstraction and transfer. That matters for domains where the model can’t rely on memorization and has to reason from structure.

Better benchmarks are useful only when they map to workflow reliability.

Where Builders Should Actually Care

- Complex planning tasks: multi-step tasks with branching logic are improving fast.
- Tool-using agents: stronger reasoning reduces brittle handoffs between tools.
- Code quality loops: verification-first pipelines benefit most from better inference depth.

Generalization gains help most when tasks are novel, not repetitive.

The Trap to Avoid

Do not treat benchmark wins as automatic business wins. You still need product constraints, observability, and quality gates. The teams that win this phase won’t be those with the fanciest model name — they’ll be those who build reliable systems around model behavior.

As someone building AI products in the real world, my practical playbook stays the same: benchmark, validate on live tasks, red-team edge cases, then deploy incrementally.
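To make that playbook concrete, here is a minimal sketch of how the four steps could be wired up as sequential gates. Everything in it is an assumption for illustration: the stage names, the pass-rate thresholds, and the `evaluate` callback are hypothetical, not part of any Gemini tooling or a specific team's pipeline. The one idea it shows is that each stage must clear its own bar before the next one runs.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence, Tuple


@dataclass(frozen=True)
class Stage:
    name: str
    min_pass_rate: float  # hypothetical threshold; tune per product


# Hypothetical stages mirroring the playbook order in the text.
PLAYBOOK = [
    Stage("benchmark", 0.80),
    Stage("live_task_validation", 0.90),
    Stage("red_team_edge_cases", 0.95),
    Stage("incremental_deploy", 0.99),
]


def pass_rate(results: Sequence[bool]) -> float:
    """Fraction of successful outcomes; an empty run counts as 0.0."""
    return sum(results) / len(results) if results else 0.0


def run_playbook(
    stages: Sequence[Stage],
    evaluate: Callable[[str], Sequence[bool]],
) -> List[Tuple[str, float, bool]]:
    """Run stages in order and stop at the first gate that fails.

    `evaluate` is an assumed callback that takes a stage name and
    returns one boolean per task attempted in that stage.
    """
    report = []
    for stage in stages:
        rate = pass_rate(evaluate(stage.name))
        passed = rate >= stage.min_pass_rate
        report.append((stage.name, rate, passed))
        if not passed:
            break  # do not advance past a failed gate
    return report


if __name__ == "__main__":
    # Made-up outcomes purely to exercise the gates.
    fake_outcomes = {
        "benchmark": [True] * 9 + [False],
        "live_task_validation": [True] * 8 + [False] * 2,
    }
    for name, rate, ok in run_playbook(PLAYBOOK, lambda n: fake_outcomes.get(n, [])):
        print(f"{name}: pass rate {rate:.2f}, gate {'passed' if ok else 'failed'}")
```

In this made-up run the model clears the benchmark gate but stalls at live-task validation, so red-teaming and deployment never start. That is the whole point of ordering the stages: a strong leaderboard number buys entry to the next check, not a release.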

Production advantage comes from disciplined rollout, not leaderboard screenshots.

Bottom line: Gemini 3 Deep Think is a real step forward. Just don’t confuse capability demos with operational readiness. The mission is still execution.
