Gemini Just Made Chrome the AI Browser Everyone Else Gave Up On

Google embedded Gemini directly into Chrome this week, rolling out globally. Summarization, shopping, cross-app context. Plus Meta's AI glasses have a privacy problem nobody wants to talk about.

The AI browser war is over and most people won't even notice it happened.

Google shipped Gemini inside Chrome this week. Not as an extension. Not as a sidebar experiment. As a native feature, rolling out globally to billions of users who already have Chrome installed. By the time competitors figure out their response, most of the internet will already be using an AI-powered browser without having made a conscious choice to do so.

That's the whole play. And it's working.

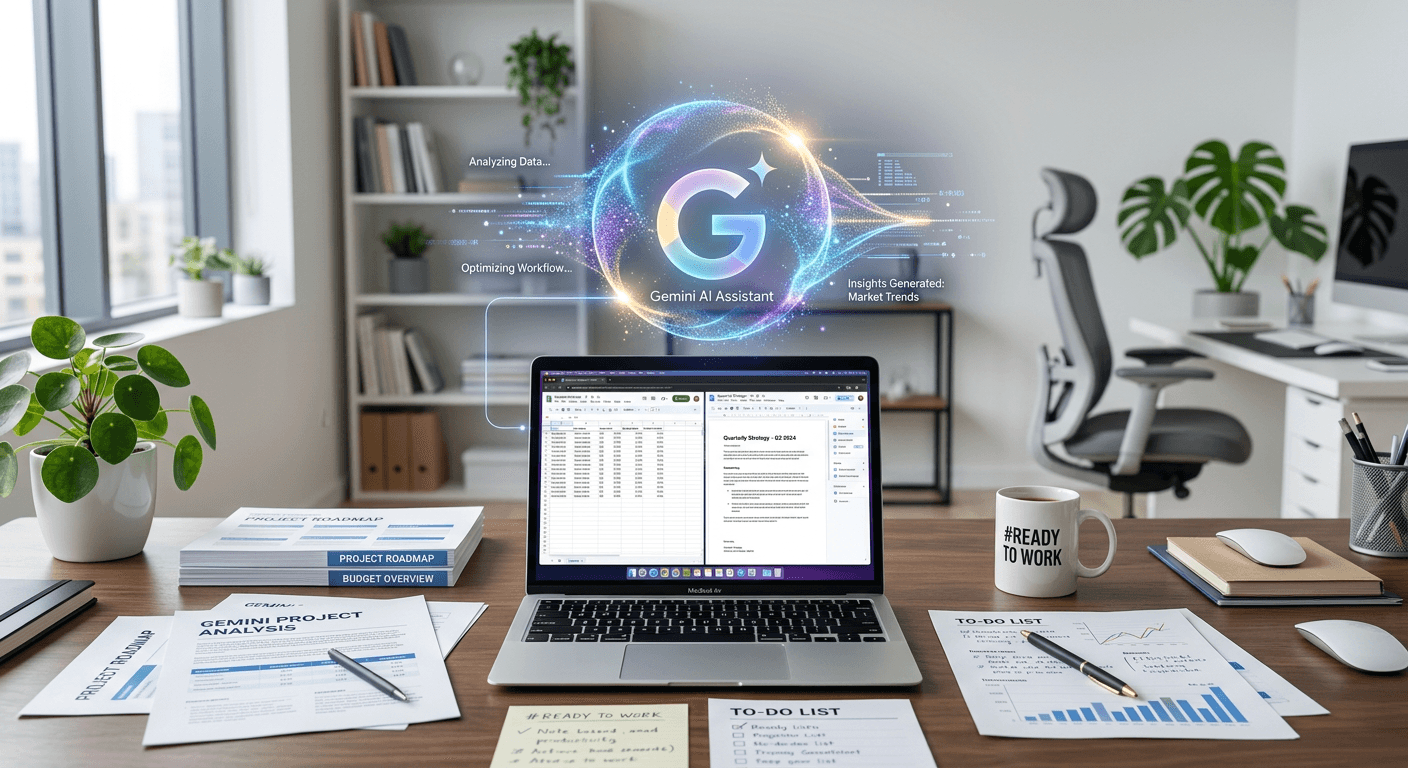

What Chrome actually does now

Here's what Gemini in Chrome can do as of this week: summarize any page you're on, compare products across tabs while you shop, answer questions about what you're reading, and pull context from your Gmail, Drive, and Calendar simultaneously. If you're a Google AI Pro or Ultra subscriber, it goes further, with the Workspace integration announced March 10.

The shopping piece alone is worth paying attention to. You can ask Chrome to find you a better deal on whatever you're looking at, and it will search, compare, and explain the tradeoffs. That's not a demo. It's a shipping feature that could change how hundreds of millions of people shop.

I keep thinking about what this means for the companies that spent the last two years building standalone AI browser products. Arc had ideas. Brave tried. Opera bolted on a chatbot. None of them have Chrome's distribution. Chrome owns roughly 65% of the browser market. When you can reach that many people by flipping a server-side flag, the competitive dynamics are just brutal.

The Workspace angle is bigger than it looks

The Chrome integration is the headline, but the Workspace updates that dropped alongside it might matter more for anyone running a business.

Gemini can now pull from your Docs, Sheets, Slides, Drive files, emails, and the open web in a single query. That's cross-app context that actually works, not the fragmented "ask each app separately" approach we've been stuck with. You can sit in a Google Doc and ask Gemini to find that spreadsheet your colleague sent last Tuesday, pull the Q1 numbers, and draft a summary. One prompt. No tab switching.

For teams already on Google Workspace, this removes a real friction point. For teams on Microsoft 365, it's a reason to reconsider. Microsoft has Copilot, sure, but Google just made the integration feel seamless in a way that Copilot still doesn't quite manage.

I'm not saying Google Workspace is better than Microsoft 365. I'm saying the AI layer on top of Workspace just got meaningfully better this week, and that gap matters when companies are making platform decisions.

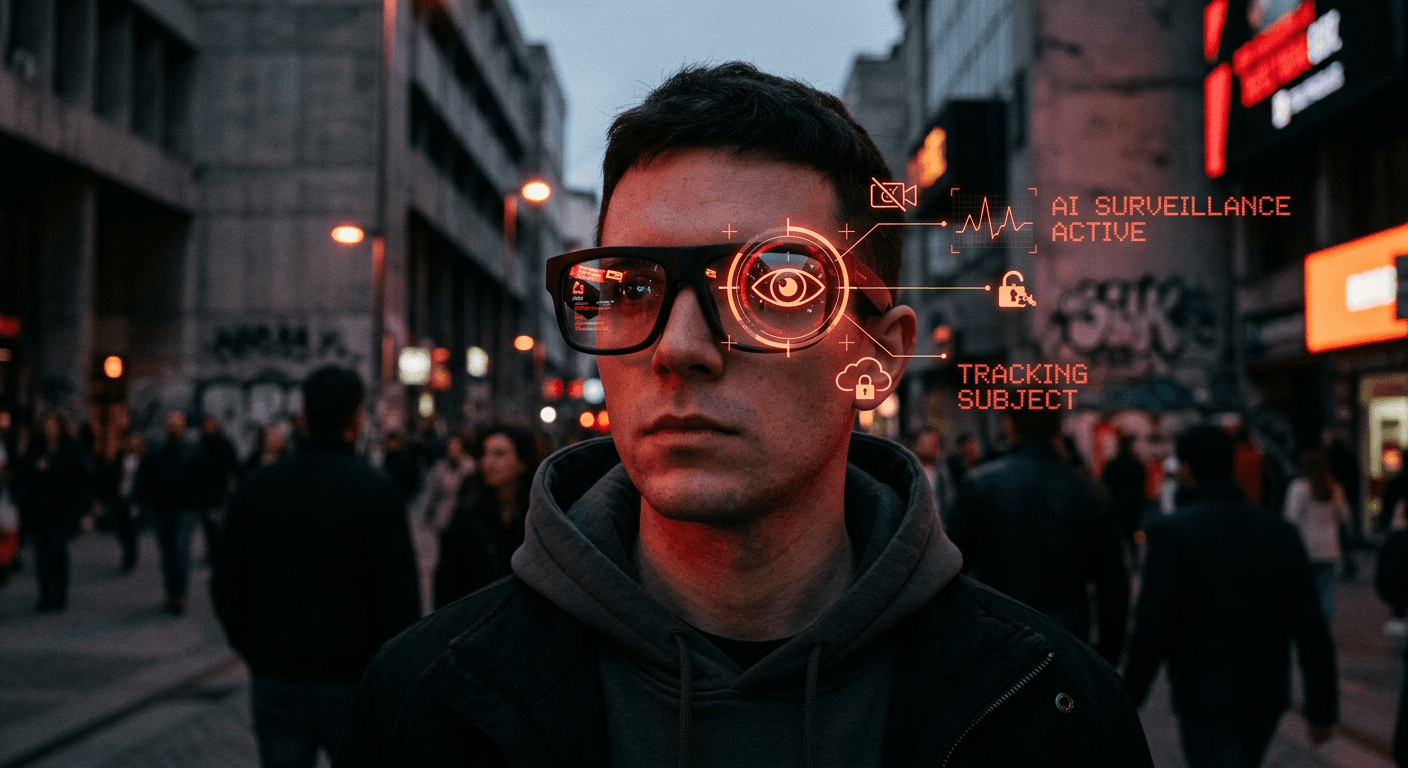

Meta's AI glasses and the consent problem

Meanwhile, Meta is shipping Gen 2 Ray-Bans with upgraded AI capabilities, and I think we need to talk about what happens when the camera faces outward.

The wearer gets a personal AI assistant that can see and hear their environment. Cool. But everyone around them becomes an unwitting participant in a persistent AI observation system. The person sitting across from you at a coffee shop didn't agree to have their face processed by Meta's models. The colleague in your meeting didn't consent to having their words analyzed in real time.

Privacy has always been framed as an individual choice: you decide what data you share about yourself. AI glasses break that framing completely. The wearer's choice to use the device makes a privacy decision for every person in the room. That's a different kind of problem, and the current regulatory frameworks aren't built for it.

I don't think Meta is evil for building these. I do think we're going to look back at this period and wonder why we didn't have the consent conversation sooner. Right now, the social norms around AI glasses are basically nonexistent. People don't know when they're being recorded, processed, or analyzed. That ambiguity is going to create problems.

Nvidia GTC and the infrastructure bet

Quick note on Nvidia, because Jensen Huang's framing ahead of next week's GTC is telling. He called AI "foundational infrastructure," which is the kind of language you use when you want governments and enterprises to think of your products like roads and power grids.

The Rubin supercomputer platform announcement is expected at GTC. If Nvidia delivers what's been rumored, we're looking at another generation of hardware that widens the gap between Nvidia and everyone else in the AI compute space. AMD and Intel keep making progress, but Nvidia's software ecosystem (CUDA, the whole stack) is the real moat. Hardware specs matter less than the fact that most major ML frameworks are optimized for Nvidia first.

Britain's datacentre problem

And because no AI news roundup is complete without a cautionary tale: the British government's much-publicized AI investment push hit a snag this week. Several of the promised datacentres apparently don't exist yet, despite being counted in the investment totals.

This is a familiar pattern with government AI strategies. Big numbers get announced. Press releases go out. Then someone checks whether the buildings are actually there. I've seen this play out in multiple countries now. The political incentive is to announce the investment. The operational reality of building datacentres (power, planning permission, cooling, staffing) moves on a completely different timeline.

It doesn't mean the UK won't build the infrastructure eventually. It means the gap between announcement and reality is wider than anyone in government wants to admit.

What I'm watching

Google just made the most consequential AI distribution move of the year by putting Gemini in Chrome. Not because the technology is necessarily better than what Anthropic or OpenAI offers, but because distribution wins. Chrome is already on the devices. The AI just showed up.

The Meta glasses situation is going to get messier before any norms settle. And Nvidia is about to remind everyone why the picks-and-shovels business is still the best business in AI.

If your team is evaluating how these shifts affect your stack or your product strategy, reach out. This is what we work on.
