Pentagon Bans Anthropic: What It Means When Washington Picks AI Winners

The Pentagon just classified Anthropic as a supply-chain risk to national security, forcing defense contractors to purge Claude from their systems. OpenAI gets the green light. Meanwhile, hundreds march through London screaming "Stop the slop." Welcome to March 2026.

I wrote about the Anthropic-Pentagon standoff four days ago. I said it would escalate. I didn't think it would escalate this fast.

Today, Defense Secretary Pete Hegseth signed a formal directive classifying Anthropic's entire product suite as a "supply-chain risk to national security." That's not a policy disagreement anymore. That's the same designation we use for Huawei. For Kaspersky. For companies the US government considers active threats.

Anthropic. A company founded in San Francisco by ex-OpenAI researchers who left because they wanted to build AI more safely.

Let that sink in.

What the ban actually does

The directive requires all Department of Defense contractors, subcontractors, and suppliers to remove Anthropic products from any system that touches classified or controlled unclassified information. That includes Claude, the API, any fine-tuned models, and any tooling built on top of Anthropic's infrastructure.

The timeline is 90 days for prime contractors and 180 days for downstream suppliers. That's tight. If you've ever worked in defense procurement, you know that swapping out a major software dependency in that window is not just expensive. It's chaos.

Lockheed Martin confirmed this afternoon that they've already begun what they're calling an "accelerated vendor transition." Raytheon and Northrop Grumman haven't issued public statements yet, but sources inside both companies say the same process is underway. Years of integration work, internal tooling, custom workflows. All of it getting ripped out.

And here's the part that should make you uncomfortable: the directive doesn't cite a single specific security vulnerability. No breach. No data exfiltration. No technical failure. The entire justification is that Anthropic's refusal to comply with Pentagon requirements for unrestricted military AI use makes them an unreliable partner, and therefore a supply-chain risk.

Read that again. They're being banned not because their technology is dangerous, but because they said no.

OpenAI steps into the spotlight

The timing is not subtle. The same week Anthropic got blacklisted, OpenAI announced an expanded partnership with the Department of Defense. New enterprise agreements. Dedicated government instances of GPT-5.3. Custom fine-tuning for defense applications with, presumably, fewer guardrails than what Anthropic was willing to provide.

Sam Altman released a statement about "responsible partnership with democratic institutions." I'll let you interpret the word "responsible" there however you want.

The irony is thick enough to cut. OpenAI, the company that went through a board coup, that has faced repeated criticism for rushing deployments, that has an ongoing FTC investigation, that Sam Altman himself was fired from and then un-fired from in the span of a weekend. That company is now the Pentagon's preferred AI partner because Anthropic was too principled.

I'm not saying OpenAI's technology is bad. I use their models regularly. But the selection criteria here have nothing to do with technical merit and everything to do with compliance. The Pentagon wanted a vendor that would say yes. OpenAI said yes.

Meanwhile, in London

While Washington was busy picking winners and losers in the AI industry, something different was happening across the Atlantic.

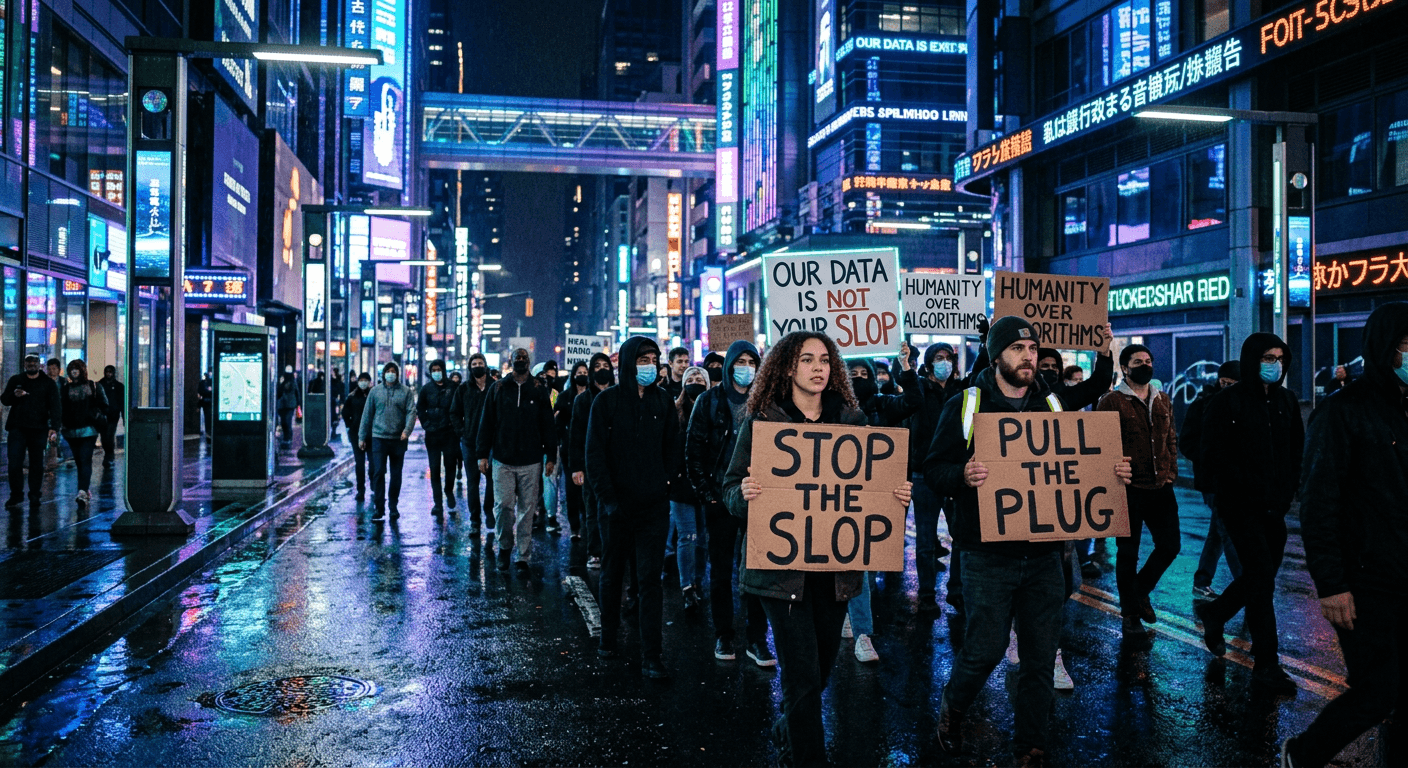

Hundreds of people marched through King's Cross in London yesterday, right through the heart of the city's tech district. The chants were simple: "Pull the plug! Stop the slop!" The crowd was a mix you don't usually see together. Union workers worried about automation. Artists angry about training data. Privacy advocates. Parents concerned about kids and AI content. A few actual Luddites, and I mean that respectfully. They had signs and everything.

What struck me about the London protest is how it sits in direct tension with the Pentagon story. In Washington, the concern is that one AI company isn't cooperative enough with military applications. In London, the concern is that AI companies are too powerful, too fast, too unaccountable. The protesters aren't making a distinction between Anthropic and OpenAI. To them, it's all the same machine.

They're not entirely wrong.

Both stories are about the same thing: who controls AI, and who gets to decide what it's used for. The Pentagon wants control through procurement leverage. The London protesters want control through collective action. Neither group trusts the companies to regulate themselves, and at this point, why would they?

What this means if you build on Anthropic

I run an AI consultancy. I have clients who use Claude in their workflows. Some of them are in defense-adjacent industries. So let me be direct about what changed today.

If you are a defense contractor or subcontractor, you need to start your migration plan now. Not next quarter. Now. The 90-day clock is already ticking, and if you think you'll get an extension, you probably won't. The current administration has shown zero interest in flexibility on this.

If you're in a regulated industry that isn't directly defense but has government contracts, watch carefully. Supply-chain risk designations have a way of spreading. What starts at DoD often moves to DHS, DOE, and other agencies. I wouldn't be surprised to see broader restrictions within the year.

If you're a startup or small business with no government exposure, this doesn't change your technical calculus today. Claude is still an excellent model. But it does change your risk calculus. Anthropic is now in a legal fight with the federal government. That fight will consume resources and attention, and if it goes badly, it could affect their ability to operate.

The bigger picture

This is the politicization of AI infrastructure. Full stop. A US-founded company is being treated like a foreign adversary because it maintained safety principles that the government found inconvenient. That is a new thing. You can argue about whether Anthropic's principles are correct. You can argue about whether they should have found a middle ground. But using national security law to punish a domestic company for saying no to a specific use case? That's a hell of a precedent.

It also sends a clear message to every other AI company: comply or get cut out. That message will change behavior. The next time a lab is asked to remove safety guardrails for a government application, they're going to remember what happened to Anthropic. Some will hold the line. Most won't.

And in London, people are marching because they feel like they have no say in any of this. They're right to feel that way. Whether you're a defense contractor being told which AI vendor to use, or a citizen watching AI reshape your industry without your input, the common thread is the same. The decisions are being made somewhere else, by people who aren't asking what you think.

I don't have a clean answer for how this resolves. What I know is that the AI industry just got a lot more political, and if you're building a business on any of these tools, you need to factor that into your planning. Not as a hypothetical. As a present reality.

Today, Washington drew a line. London drew a different one. And every AI company and every business that depends on them is standing somewhere in between.
